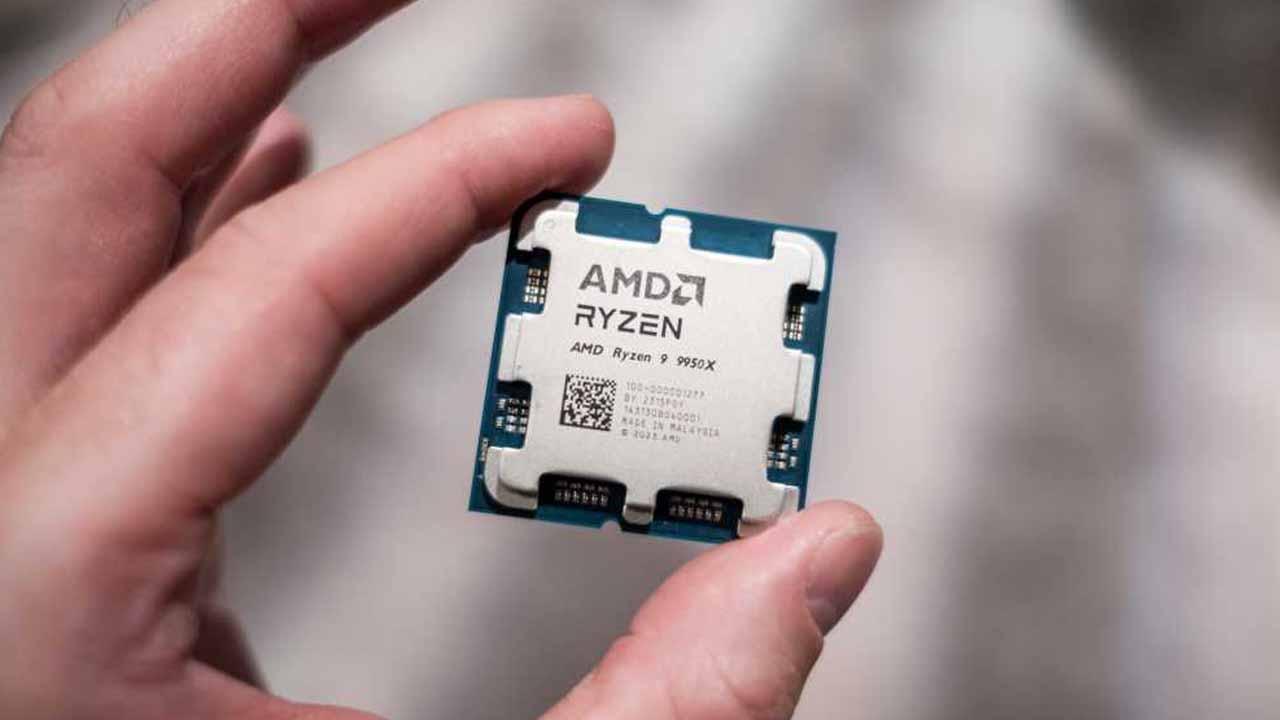

The race for artificial intelligence hegemony has almost all chipmakers up in arms, and for the moment NVIDIA is winning the war by far. The company is years ahead of its rivals precisely because it started its research years before they did, a head start that helped make it the most valuable company in the world, with the H100 GPU as its flagship product. However, the small startup Etched has created an AI chip that, it claims, is 20 times faster than the NVIDIA H100 and also cheaper.

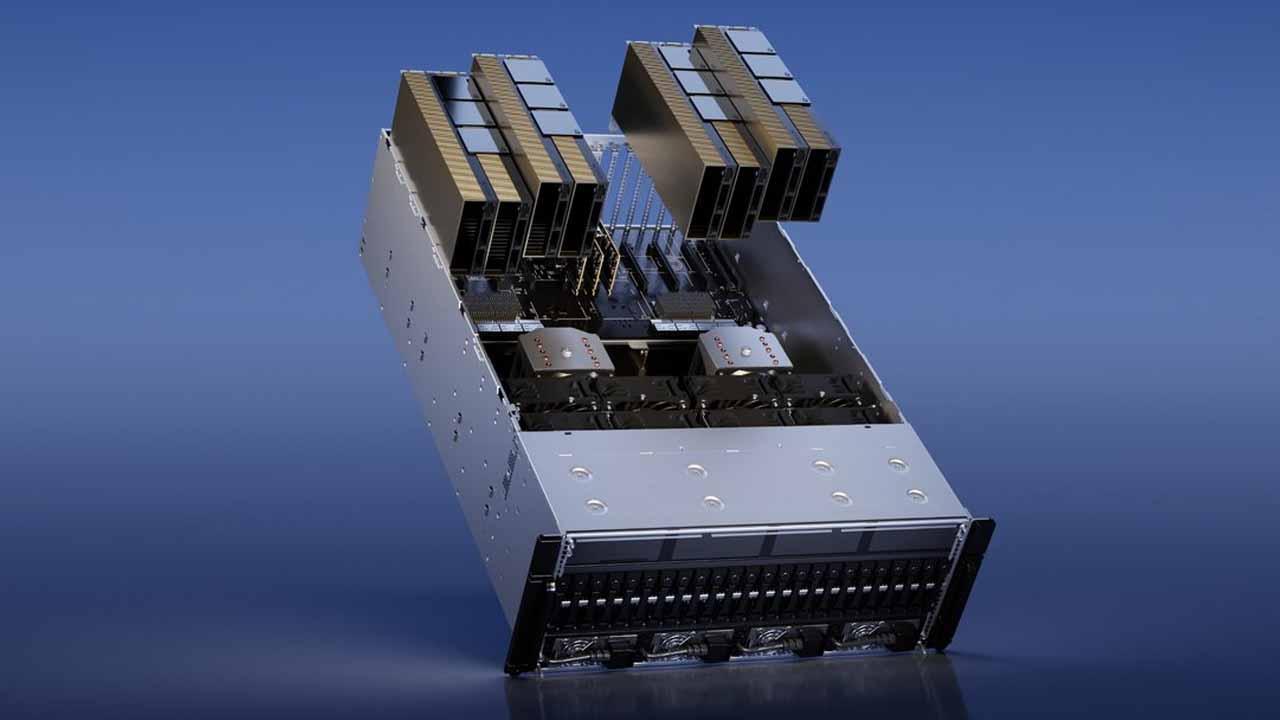

Etched, a startup that makes transformer chips, has just announced Sohu, an application-specific integrated circuit (ASIC) that it claims outperforms NVIDIA’s H100 at LLM inference. According to the company, a single server with only 8 of these ASICs matches the performance of 160 NVIDIA H100 GPUs, meaning data centers could save a lot of money on both start-up and operating costs, provided Sohu lives up to expectations.
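To see how the "8 ASICs versus 160 GPUs" figure lines up with the "20 times faster" headline, the arithmetic can be sketched in a few lines of Python. The numbers are Etched's own claims as quoted above, not independent benchmarks, and the function name is purely illustrative.

```python
# Figures quoted in the article. These are Etched's claims, not measured results.
SOHU_CHIPS_PER_SERVER = 8
EQUIVALENT_H100_GPUS = 160


def h100s_matched_per_chip(sohu_chips: int, h100_gpus: int) -> float:
    """Return how many H100 GPUs a single Sohu chip is claimed to match."""
    return h100_gpus / sohu_chips


ratio = h100s_matched_per_chip(SOHU_CHIPS_PER_SERVER, EQUIVALENT_H100_GPUS)
print(f"One Sohu chip is claimed to match {ratio:.0f} H100 GPUs")
```

In other words, the server-level comparison and the per-chip "20 times" claim are the same statement expressed two ways.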

How a startup stands up to NVIDIA in AI chips

According to information provided by the company, current AI accelerators (whether CPU or GPU) are designed to work with different AI architectures. This means the hardware must be able to support different models, such as convolutional neural networks, long short-term memory (LSTM) networks, state space models, and so on. Because these designs accommodate different architectures, most AI chips devote a large portion of their resources to programmability.

Most large language models (LLMs) use matrix multiplication for almost all of their computing tasks, and Etched reports that NVIDIA H100 GPUs use only 3.3% of their transistors for this. This means that the remaining 96.7% of the silicon is used for other, more general tasks.
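To make the matrix multiplication point concrete, here is a minimal pure-Python sketch of scaled dot-product attention, the core operation of a transformer. It is a toy illustration under simplifying assumptions (a single head, no batching, no learned weights) and says nothing about how Sohu or the H100 actually implement it. Note that the heavy lifting is two matrix multiplications, with only a small softmax step in between.

```python
import math


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(row)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(q[0])
    k_transposed = [list(col) for col in zip(*k)]
    scores = matmul(q, k_transposed)  # first matrix multiplication
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, v)  # second matrix multiplication


# One query that matches both keys equally, so the output is the average of the values.
result = attention([[0.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[2.0, 4.0], [6.0, 8.0]])
print(result)
```

In a real model these matrices have thousands of rows and columns, which is why hardware dedicated to multiplying them quickly is attractive.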

However, the transformer architecture has become very popular. ChatGPT, the most popular LLM today, is based on a transformer model, and it is not the only one: other models such as Sora, Gemini, Stable Diffusion and DALL-E are built on it too.

Etched bet on this architecture a few years ago, when it launched the Sohu project. The chip builds the transformer architecture into the hardware, allowing more transistors to be allocated to pure AI calculations. So, instead of making a chip capable of adapting to every AI architecture, Etched created one designed specifically for transformer models, which is why, according to the company, it can deliver much higher performance than others.

Today, NVIDIA is one of the most valuable companies in the world, with record revenues year after year thanks to the current high demand for AI chips. But this launch could, if Etched is able to mass-produce the chip at the scale the market needs, threaten NVIDIA’s leadership in AI, especially if the companies behind transformer-based models such as ChatGPT and the others mentioned above decide to switch to Sohu.

Ultimately, efficiency is one of the keys to winning this race for AI hegemony, and whoever can run models on the fastest and most affordable hardware will emerge victorious.