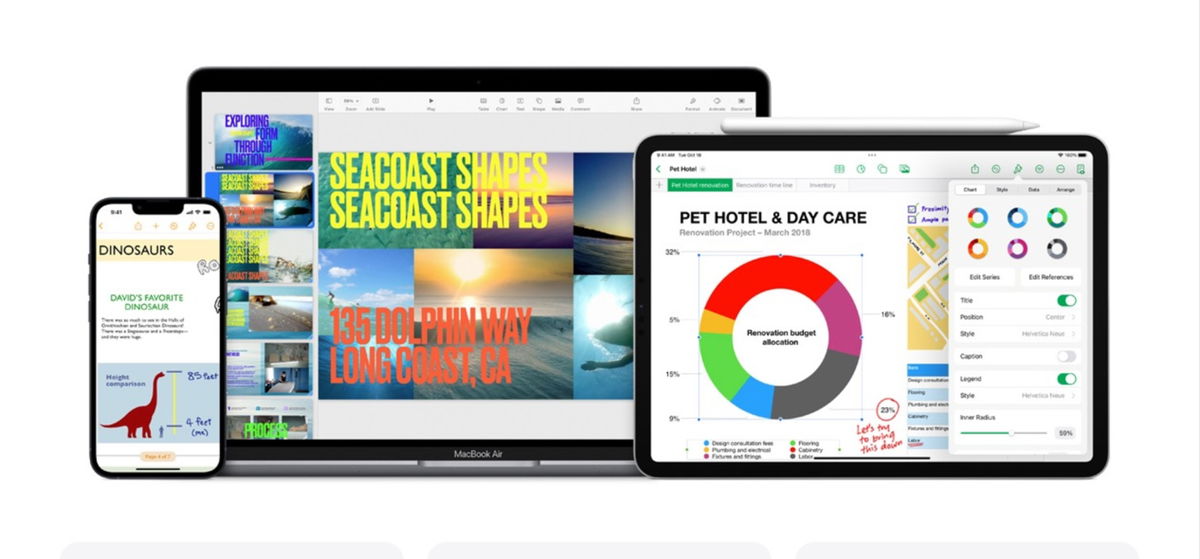

In an unprecedented move, Apple has released the iOS 18.1 beta more than a month before the release of iOS 18. The company is running two betas in parallel: iOS 18 for all devices, and iOS 18.1, iPadOS 18.1, and macOS 15.1 for devices capable of running Apple Intelligence (iPhone 15 Pro and Pro Max, plus iPads and Macs with M-series chips).

Since WWDC in June, it’s been clear that Apple Intelligence would not arrive all at once, with features rolling out gradually. Now the timeline has shifted: none of the features will ship with the iOS 18 release. Some arrive in iOS 18.1, others later this year, and still others in early 2025. If you have a device that can run it, here are the Apple Intelligence features you’ll find in iOS 18.1 and how they work in the current beta.

Writing Tools

Anywhere you can select and copy or paste text, you can open the new Writing Tools menu. Select your text, then choose Writing Tools from the copy/paste pop-up menu (you may need to tap the > arrow to see more options).

Apple Intelligence can proofread selected text, highlighting grammar and punctuation changes and suggesting edits, or rewrite it to sound friendlier and more casual, more professional, or more concise.

For longer text, the tool can create a summary or a bulleted list of key points, or turn raw text into a list or table.

Improved Siri

With iOS 18.1, you’ll notice a big change in the way Siri looks but a much smaller change in how Siri works. Summoning Siri on your iPhone or iPad makes a colorful glow appear around the edges of the screen, and the glow reacts to your voice as you speak. You can also double-tap the bottom edge of the screen to bring up a keyboard and type to Siri.

But the functional improvements are limited. Siri better understands speech peppered with “uhs” and “ahs,” or in which you correct yourself mid-sentence, and it keeps better track of the context of your previous request, so you can ask more natural follow-up questions.

The major upgrade to Siri’s capabilities shown at WWDC, in which Siri gains contextual knowledge of you as an individual and can perform actions within apps, will come later. The personal context update could arrive before the end of the year, but in-app actions and on-screen awareness won’t arrive until 2025.

Mail

At the top of every email or thread is a “Summary” button that can quickly tell you what an entire email or thread is about.

Mail can also display priority emails at the top of your inbox, and the inbox view shows a brief summary of each email instead of the first two lines of the message body. These summaries also appear in notifications on the Lock Screen and in Notification Center.

Messages

When you’re in a text conversation with someone, the keyboard will show smart reply suggestions. This seems limited to messages you’ve just received: if you jump into an older conversation, you get only the keyboard’s usual word suggestions.

New, unread text messages (including several messages in a row from the same person) are summarized the same way as in Mail. In your message list, you see a short summary instead of the beginning of the most recent message, and the same goes for notifications on the Lock Screen and in Notification Center.

Safari Summaries

When you’re in Safari’s Reader view, you’ll notice a new “Summarize” option at the top of the page that creates a one-paragraph summary of what’s on the page.

Call recording

Call recording is now integrated into the Phone app and Notes. Simply tap the call recording button in the top-left corner of the call screen. A short message plays to let both parties know that the call is being recorded. Once the call ends, you’ll find the recording saved in Notes, along with an AI-generated transcript.

Reduce Interruptions Focus

The new Reduce Interruptions Focus mode is here in iOS 18.1. It uses on-device intelligence to review notifications and incoming calls or texts, letting through the ones it deems important and muting the rest (muted items are still delivered, just silently).

Any people or apps you have set to always allow or never allow still follow those rules. You’ll find Reduce Interruptions alongside all the other Focus modes, in Settings > Focus or by tapping Focus in Control Center.

Photos

The Photos interface is getting a complete overhaul in iOS 18, and early reviews from testers are mixed. The new AI features coming to iOS 18.1 should still be a hit.

Search now understands natural language, so you can describe the people, places, and objects you’re looking for in everyday terms. Enhanced search can also find specific moments within videos. You can also create Memories from a natural-language description and then edit them in Memory Maker.

Note: The app may need to index your photo library overnight before these advanced natural language search tools work properly.

Features coming to iOS 18.2 or 18.3

Once this first wave of Apple Intelligence features is available in iOS 18.1, we won’t have to wait long for the next round of improvements. More features should be available by the end of the year, likely in iOS 18.2, but possibly in iOS 18.3 as well.

The image features expected with the initial release now appear to be coming in iOS 18.2 or 18.3 as well. These include the “Clean Up” tool in Photos for removing unwanted background objects, the Image Playground app for experimenting with AI image generation in a handful of styles, and Genmoji, for creating custom emoji, including ones based on people in your photos.

The delayed features also include the much-vaunted ChatGPT integration. If you want to generate new text rather than only rewrite existing text, you’ll need it. It will also give Siri new capabilities, since Siri will be able to pass questions and requests to ChatGPT (with your permission).

Features coming to iOS 18.4 next year

If you’re looking forward to a much more powerful and capable Siri, you’ll have to wait until spring 2025. These features are currently targeted for iOS 18.4, which should enter beta early next year and will likely be released around March.

This is when Siri will gain the ability to “see” what’s on your screen and act on it and, perhaps more importantly, to perform many actions within apps. That depends on a massive expansion of “app intents” (the hooks developers use to integrate their apps with Siri), which will take a lot of testing and app updates.

Siri will also be able to build a semantic index of your personal context, including things like family relationships, frequently visited locations, and more. It’s unclear whether this feature will arrive by the end of the year or as part of Siri’s big spring update.

Table of Contents