Android phone cameras keep getting better, but however good the sensors become, physical limitations prevent them from always delivering the best result. That is why Google has always focused more on improving the algorithms than the sensors in its Pixel phones.

It’s easy to see why. Phone sensors are limited by the space available, which keeps them from competing with DSLRs or professional cameras, so the logical approach is to improve the data they capture using math and everything we know about how images are formed. Interestingly, though, the latest breakthrough did not come from Google’s Pixel camera work.

Much sharper night shots

In fact, the study, published on Cornell University’s arXiv repository by researchers at Google Research, began as an attempt to fix a tool that has nothing to do with Pixel phones. That tool is NeRF, an image synthesizer capable of creating three-dimensional scenes from photographs; it is a landmark project, even if it went somewhat unnoticed when it was presented last May.

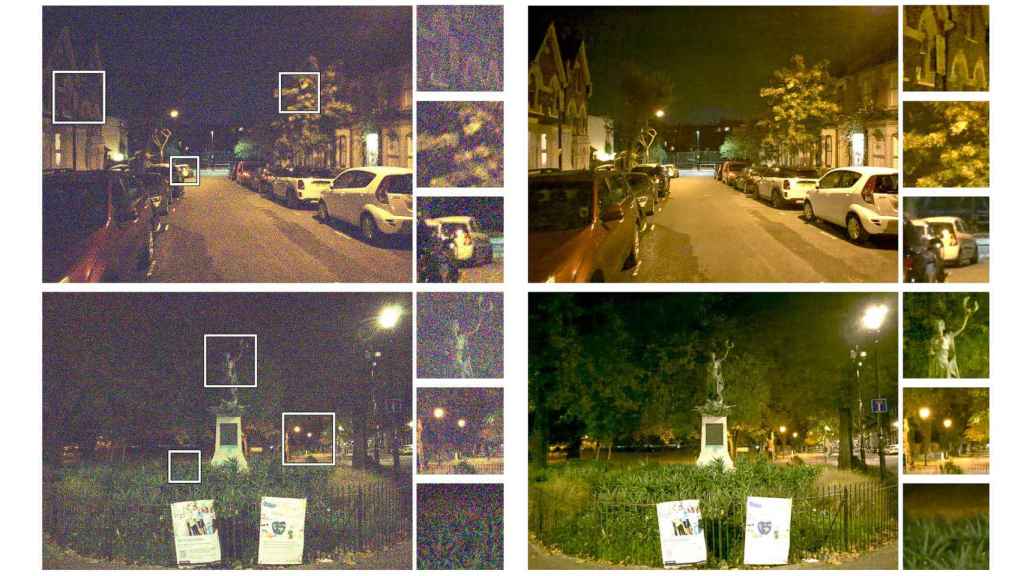

During development, its creators ran into a major frustration: NeRF works much better with photos taken during the day or in very good lighting, while photos taken at night or in low light were very problematic and did not yield acceptable results in the program. So they focused on creating RawNeRF, an AI-based algorithm that would fix these photographs so that 3D environments could be created from them more easily.

The Google Research project succeeded in removing noise from night photos

So, almost by accident, these developers created a remarkable way to clean up night photos. The main culprit is ‘noise’, the speckled ‘dots’ that appear in photos when not enough light reaches the sensor. Filters that remove these dots already exist, but they do so by sacrificing detail, leaving a photo that looks painted over because it has lost its sharpness.
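
To see why conventional noise filters trade detail for smoothness, here is a minimal Python sketch of the simplest such filter, a box (moving-average) blur applied to one synthetic scanline. It is not RawNeRF’s method and not what any particular phone or app uses; the scanline, noise level and filter radius are hypothetical values chosen only for illustration.

```python
import random
import statistics


def box_filter(signal, radius):
    """Replace each sample with the mean of its neighbors within `radius` (clamped at the ends)."""
    n = len(signal)
    out = []
    for i in range(n):
        window = signal[max(0, i - radius):min(n, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def make_noisy_edge(n=200, noise=0.1, seed=0):
    """Hypothetical scanline: dark left half (0.2), bright right half (0.8), plus Gaussian noise."""
    rng = random.Random(seed)
    clean = [0.2 if i < n // 2 else 0.8 for i in range(n)]
    noisy = [v + rng.gauss(0.0, noise) for v in clean]
    return clean, noisy


def residual_noise(signal, clean, start=10, stop=90):
    """Standard deviation of (signal - clean) over a flat dark stretch: the noise left over."""
    return statistics.pstdev([signal[i] - clean[i] for i in range(start, stop)])


def edge_width(signal, low=0.2, high=0.8):
    """Count samples between 10% and 90% of the step height: a crude measure of blur."""
    t10 = low + 0.1 * (high - low)
    t90 = low + 0.9 * (high - low)
    return sum(1 for v in signal if t10 < v < t90)


clean, noisy = make_noisy_edge()
smoothed = box_filter(noisy, radius=3)

print(f"noise before filtering: {residual_noise(noisy, clean):.3f}")
print(f"noise after filtering:  {residual_noise(smoothed, clean):.3f}")
# The filter is linear, so blurring the clean step shows what it does to the edge itself.
print("edge width before:", edge_width(clean))                     # 0: a perfectly sharp step
print("edge width after: ", edge_width(box_filter(clean, radius=3)))  # 6: the edge is smeared
```

Widening the radius averages away more noise but smears the edge over even more samples, which is the trade-off described above: a fixed filter like this cannot separate noise from genuine detail.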

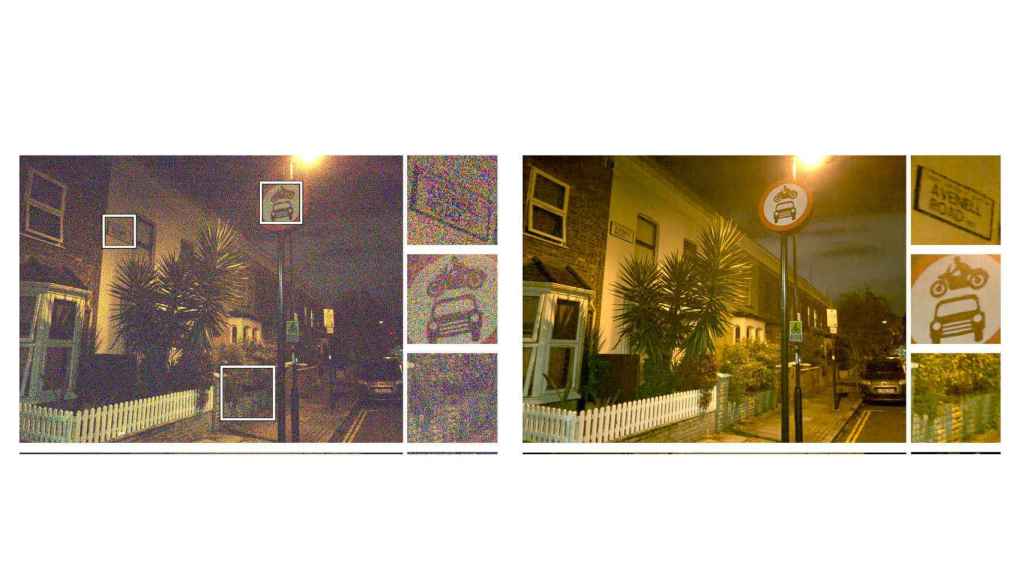

Results of the new algorithms developed at Google Research

The project’s key achievement is that the AI can eliminate noise without sacrificing sharpness, something we might have thought impossible. In fact, the results are better than those of programs dedicated specifically to this task, even though that was not the project’s initial goal. The resulting images are rendered in high dynamic range (HDR), so users keep the ability to adjust aspects such as exposure or color afterward.
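
Why does HDR output preserve the ability to change exposure? Here is a minimal Python sketch, assuming linear pixel values and modeling a standard (non-HDR) image as a simple clip-and-quantize to 8 bits; real formats also apply a gamma curve, which this simplification ignores. The scene values are hypothetical. It darkens the same scene by two stops, once from HDR data and once from already-clipped 8-bit data.

```python
def adjust_exposure(pixels, stops):
    """Scale linear pixel values by 2**stops (negative stops = darker)."""
    factor = 2.0 ** stops
    return [p * factor for p in pixels]


def to_8bit(pixels):
    """Clip to [0, 1] and quantize to 0..255, a simplified stand-in for a standard (non-HDR) image."""
    return [round(min(max(p, 0.0), 1.0) * 255) for p in pixels]


# Hypothetical linear scene values: shadow, midtone, bright lamp, brighter lamp.
# Anything above 1.0 is brighter than the display's white point.
scene = [0.05, 0.5, 2.0, 4.0]

# HDR workflow: keep the full-range values and only quantize after editing.
hdr_result = to_8bit(adjust_exposure(scene, -2))

# Standard workflow: clip and quantize first, then try to edit.
stored = [v / 255 for v in to_8bit(scene)]
ldr_result = to_8bit(adjust_exposure(stored, -2))

print("HDR, 2 stops darker:  ", hdr_result)  # [3, 32, 128, 255]: the two lamps stay distinct
print("8-bit, 2 stops darker:", ldr_result)  # [3, 32, 64, 64]: both lamps collapse to one grey
```

Because the 8-bit version was clipped before editing, no exposure change can separate the two lamps again, while the HDR version still holds that information.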

Google has yet to confirm whether it will use this technology in Android, but since this is a Google Research project, we expect to at least see improvements in the photos we take in the future.
