The arrival of iOS 15 brought a wide range of new features aimed at protecting children online. Apple presented three major groups of features. The two available today are Communication Safety in Messages and warnings and guidance on these issues in Siri, Spotlight and Safari. Apple’s flagship feature, however, was scanning users’ iCloud photos to find child pornography. After several months of postponement, Apple has abandoned that project.

Apple’s project: a photo scanner to find CSAM

Apple’s flagship tool became notorious for the ethical and privacy dilemmas it raised when it was introduced over a year ago. Its purpose was to scan users’ iCloud photos for child pornography. Apple did not use that term officially; it referred instead to CSAM (Child Sexual Abuse Material).

Some child protection features currently available on iOS

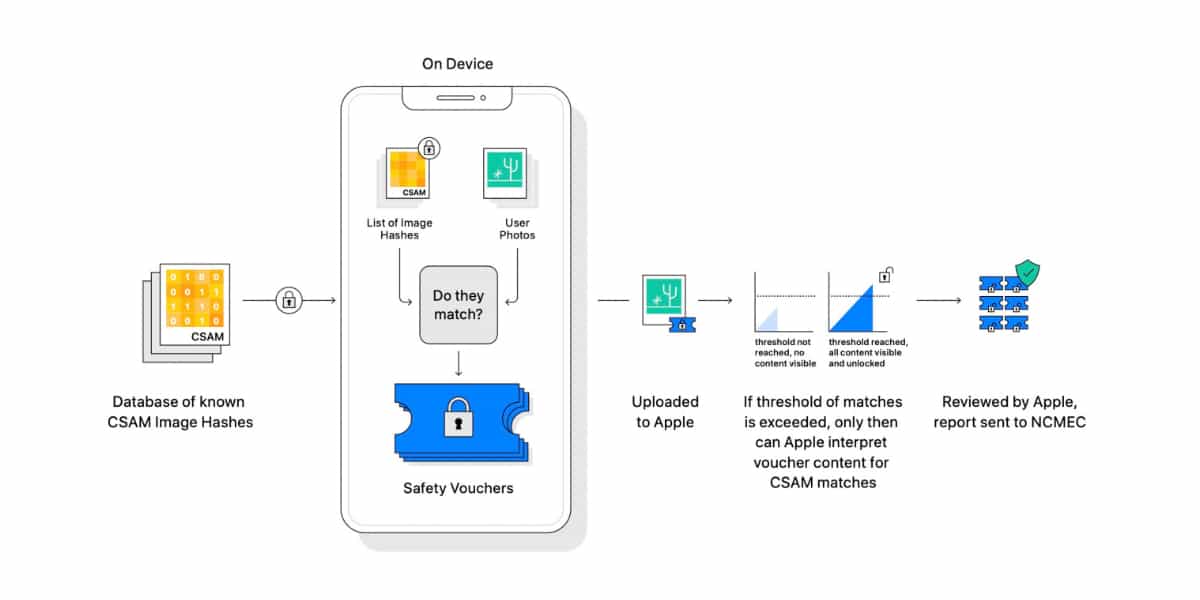

To do this, Apple partnered with NCMEC, the National Center for Missing & Exploited Children in the United States. NCMEC maintains a large database of known child pornography, or CSAM, images. Each of these images has a signature, or hash, that does not vary. That is, if an image in the user’s library has the same signature as one in the database, it is flagged as a match.
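To make the idea of matching signatures concrete, here is a minimal Python sketch, assuming a plain SHA-256 digest as the signature. This is a simplification and not Apple’s method: Apple’s design used a perceptual hash (NeuralHash) intended to give visually identical images the same signature even after resizing or recompression, whereas SHA-256 only matches byte-identical files. The names `image_signature` and `is_known` are hypothetical.

```python
from __future__ import annotations

import hashlib


def image_signature(image_bytes: bytes) -> str:
    # Hypothetical stand-in: SHA-256 of the raw bytes. Unlike a perceptual
    # hash such as NeuralHash, any change to the file changes this value.
    return hashlib.sha256(image_bytes).hexdigest()


def is_known(image_bytes: bytes, known_signatures: set[str]) -> bool:
    # A match means the image's signature appears in the known database.
    return image_signature(image_bytes) in known_signatures


# Toy "database" built from placeholder bytes, not real image data.
known = {image_signature(b"known-image-bytes")}
print(is_known(b"known-image-bytes", known))  # True
print(is_known(b"some other photo", known))   # False
```

In this naive form the device would learn the result of every lookup, which is exactly what the private set intersection step described next is meant to prevent.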

Before an image is stored in iCloud Photos, it is checked on the device against an unreadable set of known CSAM signatures. This matching process relies on a cryptographic technique called private set intersection (PSI), which determines whether there is a match without revealing the result to the device. PSI allows Apple to know whether an image hash matches a known CSAM image hash, without learning anything about image hashes that do not match. PSI also prevents the user from knowing whether there is a match.
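The private set intersection idea can be illustrated with a textbook Diffie-Hellman-style PSI, sketched below in Python. This is a toy under stated assumptions, not Apple’s protocol: Apple’s actual system combined PSI with threshold secret sharing and encrypted "safety vouchers", none of which is reproduced here, and the modulus `2**255 - 19` is used only because it is a known prime, not because it is a secure group choice for PSI. The classes `Server` and `Client` and their methods are hypothetical. The key property is that exponentiation commutes, (H(x)^a)^b = (H(x)^b)^a mod P, so the server can compare doubly blinded values without seeing the client’s non-matching hashes in the clear, while the client never receives the values it would need to tell which of its items matched.

```python
import hashlib
import math
import secrets

# Toy modulus: 2**255 - 19 is prime, but this is NOT a vetted PSI group.
P = 2**255 - 19


def hash_to_group(item: bytes) -> int:
    """Map an item to a nonzero element of Z_P^* (toy hash-to-group)."""
    digest = int.from_bytes(hashlib.sha256(item).digest(), "big")
    return digest % (P - 1) + 1


def random_exponent() -> int:
    """Secret exponent coprime to P - 1, so x -> x**k mod P is a bijection."""
    while True:
        k = secrets.randbelow(P - 2) + 1
        if math.gcd(k, P - 1) == 1:
            return k


class Server:
    """Hypothetical server: holds the known hashes and learns which client items match."""

    def __init__(self, database):
        self._b = random_exponent()
        self._db = list(database)

    def blinded_database(self):
        # H(s)^b for each entry; without b the client cannot read these.
        return [pow(hash_to_group(s), self._b, P) for s in self._db]

    def find_matches(self, client_blinded, double_blinded_db):
        # Raise each H(c)^a to b and compare with the H(s)^(b*a) set.
        targets = set(double_blinded_db)
        return [i for i, v in enumerate(client_blinded)
                if pow(v, self._b, P) in targets]


class Client:
    """Hypothetical device: never sees H(c)^(a*b), so it cannot tell what matched."""

    def __init__(self, items):
        self._a = random_exponent()
        self._items = list(items)

    def respond(self, blinded_db):
        client_blinded = [pow(hash_to_group(c), self._a, P) for c in self._items]
        double_blinded_db = [pow(v, self._a, P) for v in blinded_db]
        return client_blinded, double_blinded_db


server = Server([b"known-1", b"known-2", b"known-3"])
client = Client([b"holiday.jpg", b"known-2", b"cat.jpg"])
client_blinded, double_blinded_db = client.respond(server.blinded_database())
matches = server.find_matches(client_blinded, double_blinded_db)
print(matches)  # [1]: only the client's second item is in the database
```

Because each secret exponent is coprime to P - 1, raising to it is a bijection, so two doubly blinded values are equal exactly when the underlying hashes are equal (barring SHA-256 collisions).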

Apple said the probability of a false alarm was minimal, because an account needed on the order of 30 photos whose signatures, or hashes, matched the CSAM database before Apple would intervene. However, the technology, ethics and security communities, as well as Apple’s own employees, raised an avalanche of criticism that led Apple to delay the feature, which never shipped in iOS 15.
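Why does a threshold help? A rough back-of-the-envelope model, assuming every photo independently has the same small false-match probability p, treats the number of false matches in a library of n photos as a binomial random variable and asks for the chance of reaching the threshold. The values below (n = 10,000 photos, p = 1e-6) are hypothetical and are not Apple’s published figures; real false matches from a perceptual hash need not be independent. The function name `prob_at_least` is also hypothetical.

```python
import math


def prob_at_least(n: int, p: float, threshold: int) -> float:
    """P(X >= threshold) for X ~ Binomial(n, p), with terms computed in log space."""
    log_p = math.log(p)
    log_q = math.log1p(-p)
    log_n_fact = math.lgamma(n + 1)
    total = 0.0
    for k in range(threshold, n + 1):
        log_term = (log_n_fact - math.lgamma(k + 1) - math.lgamma(n - k + 1)
                    + k * log_p + (n - k) * log_q)
        total += math.exp(log_term)  # underflows harmlessly to 0.0 for large k
    return total


# Hypothetical parameters, not Apple's figures.
N_PHOTOS = 10_000
P_FALSE = 1e-6

for threshold in (1, 30):
    print(f"threshold={threshold}: {prob_at_least(N_PHOTOS, P_FALSE, threshold):.3e}")
```

Under these assumptions, the chance of at least one false match is about 1%, while the chance of 30 or more is vanishingly small; the threshold trades a little sensitivity for a much lower false-alarm rate per account.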

Child pornography photo scanning project halted

A few hours ago, WIRED published a statement from Apple announcing that it has abandoned development of this iCloud photo scanner for child pornography:

After extensive consultation with experts to gather feedback on child protection initiatives we proposed last year, we are deepening our investment in the Communication Safety feature that we first made available in December 2021. We have further decided to not move forward with our previously proposed CSAM detection tool for iCloud Photos. Children can be protected without companies combing through personal data, and we will continue working with governments, child advocates, and other companies to help protect young people, preserve their right to privacy, and make the internet a safer place for children and for us all.

Apple is therefore abandoning a project it announced a little over a year ago, after the deluge of security concerns and criticism the measure drew from the moment it was presented. Apple is now trying to move past the controversy by investing at the source, trying to prevent child pornography from being produced in the first place, and by investing in other kinds of measures such as the Communication Safety feature in Messages.