One of the biggest challenges in processor design is the power consumed in processing and moving data. In recent years, much of the engineering effort has gone not into making individual threads faster, but into moving data quickly enough to keep the processing units busy.

Whether a computer uses the Harvard or Von Neumann model, it needs memory that the processor can access in order to function. In the simplest systems, this memory sits on a separate chip and is reached over an interconnect. The difficulty becomes clear once we take a few basic principles into account.

The first and most important is that the resistance of a wire increases with its length. By Ohm’s law, voltage is the product of resistance and current, so pushing the same current through a longer wire takes a higher voltage. What does this have to do with semiconductors? Chips are, after all, very small electrical circuits, so the farther the data has to travel, the higher the power consumption.
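To make the length dependence concrete, here is a minimal sketch of the two relations involved: the resistance of a uniform wire, R = ρ · L / A, and Ohm’s law, V = I · R. The wire dimensions and current are hypothetical values chosen only for illustration, and the function names are not taken from any library.

```python
# Resistivity of copper at room temperature, in ohm-metres.
COPPER_RESISTIVITY = 1.68e-8


def wire_resistance(length_m, cross_section_m2, resistivity=COPPER_RESISTIVITY):
    """Resistance of a uniform wire: R = rho * L / A."""
    return resistivity * length_m / cross_section_m2


def voltage_drop(current_a, resistance_ohm):
    """Ohm's law: V = I * R."""
    return current_a * resistance_ohm


# Hypothetical wire: 1 square micrometre cross-section carrying 1 mA.
area_m2 = 1e-12
current_a = 1e-3

short_wire = wire_resistance(1e-3, area_m2)   # 1 mm long
long_wire = wire_resistance(10e-3, area_m2)   # 10 mm long

for name, r in (("1 mm", short_wire), ("10 mm", long_wire)):
    drop_mv = voltage_drop(current_a, r) * 1e3
    print(f"{name}: {r:.1f} ohm, {drop_mv:.1f} mV drop")
```

Under these assumptions, a wire ten times longer has ten times the resistance and, for the same current, ten times the voltage drop.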

The reason lies in the basic formula for dynamic power consumption: $P = V^2 \cdot C \cdot f$, where V is the voltage, C is the load capacitance being switched and f is the frequency. We have seen that the required voltage rises with resistance, and it also has to rise with clock speed.
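As a quick illustration of the formula, the sketch below evaluates P = V² · C · f at two operating points. The voltages, capacitance and frequencies are hypothetical and do not describe any real chip.

```python
def dynamic_power(voltage_v, capacitance_f, frequency_hz):
    """Dynamic switching power in watts: P = V^2 * C * f."""
    return voltage_v ** 2 * capacitance_f * frequency_hz


# Hypothetical load of 1 nF of switched capacitance.
capacitance_f = 1e-9

base = dynamic_power(1.0, capacitance_f, 2e9)     # 1.0 V at 2 GHz
faster = dynamic_power(1.2, capacitance_f, 3e9)   # 1.2 V at 3 GHz

print(f"base:   {base:.2f} W")
print(f"faster: {faster:.2f} W")
print(f"clock ratio: {3e9 / 2e9:.2f}x, power ratio: {faster / base:.2f}x")
```

Because voltage enters the formula squared, a 1.5x higher clock that also needs a higher voltage costs more than 1.5x the power in this example.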

Vertical interconnections

With the basic principle in hand, the solution is to shorten the wiring by bringing memory closer to the processor. The first limitation is that communication between CPU and RAM runs horizontally across the PCB, with the communication interface routed at each end, so at some point the distance cannot be reduced any further.

Since power consumption grows faster than linearly with clock speed (the voltage has to rise along with the frequency), the better option is to increase the number of interconnects in the communication interface instead. But we are limited by the size of that interface: it sits on the perimeter of the processor, so adding interconnects means enlarging the chip, which makes it more expensive to manufacture. The solution? Place the memory on top of the chip, so that the connections can be laid out as a matrix across its surface.
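The gain from moving the interface off the perimeter can be estimated with simple geometry. The sketch below counts how many connection points fit on a square die when they are limited to a single row around the edge, and when they fill the whole surface as a matrix. The die size and pitch are hypothetical, and real designs reserve many of these positions for power and ground.

```python
def grid_side(side_um, pitch_um):
    """Connection points that fit along one side of a square die."""
    return side_um // pitch_um


def perimeter_connections(side_um, pitch_um):
    """Points on the outer ring of the grid only (edge-limited interface)."""
    n = grid_side(side_um, pitch_um)
    inner = max(n - 2, 0)
    return n * n - inner * inner


def matrix_connections(side_um, pitch_um):
    """Points when the whole surface is used (vertical, area-array interface)."""
    n = grid_side(side_um, pitch_um)
    return n * n


# Hypothetical die: 10 mm per side, one connection every 100 micrometres.
side_um, pitch_um = 10_000, 100
edge = perimeter_connections(side_um, pitch_um)
area = matrix_connections(side_um, pitch_um)
print(f"perimeter: {edge} connections")
print(f"matrix:    {area} connections")
```

The perimeter count grows linearly with the side of the die while the matrix count grows with its square, which is why a vertical interface can be much wider without a larger chip.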

Together, these two changes let us increase the number of interconnects, which in turn lets us lower the clock speed while keeping the same bandwidth. The shorter wiring distance reduces consumption further. The result? The energy cost of data traffic is reduced by 10%.
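The trade-off can be sketched with a toy model that applies P = V² · C · f to every wire of a parallel interface. It assumes one bit per wire per clock and that every wire switches on every cycle. All figures are hypothetical and are not meant to reproduce the saving quoted above; in particular, the lower voltage and lower per-wire capacitance of the wide interface are assumptions standing in for the slower clock and the shorter vertical wiring.

```python
def bandwidth_bits_per_s(wires, clock_hz):
    """Raw bandwidth, assuming one bit per wire per clock cycle."""
    return wires * clock_hz


def interface_power(wires, clock_hz, voltage_v, wire_capacitance_f):
    """Total switching power: P = V^2 * C * f summed over all wires."""
    return wires * voltage_v ** 2 * wire_capacitance_f * clock_hz


# Hypothetical narrow, fast interface over long horizontal traces.
narrow = dict(wires=64, clock_hz=4e9, voltage_v=1.2, wire_capacitance_f=2e-12)
# Hypothetical wide, slow interface over short vertical connections.
wide = dict(wires=1024, clock_hz=250e6, voltage_v=0.8, wire_capacitance_f=0.2e-12)

for name, cfg in (("narrow", narrow), ("wide", wide)):
    bw = bandwidth_bits_per_s(cfg["wires"], cfg["clock_hz"])
    power = interface_power(**cfg)
    print(f"{name}: {bw / 8e9:.0f} GB/s, {power * 1e3:.1f} mW")
```

Both configurations deliver the same raw bandwidth, but under these assumptions the wide interface spends far less power, because it can run at a lower voltage and drives less capacitance per wire.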

The LLC problem and consumption

In a multi-core design, whether a CPU or a GPU, there is always a last-level cache (LLC): the cache farthest from the cores and closest to memory. Its job is to:

- Keep memory addressing coherent across the cores that share it.

- Let the cores communicate with each other without going through RAM, which reduces consumption.

- Give the cores that share it access to a common memory.

The problem arises when a design splits the cores across multiple chips while still expecting them to work together as a single whole. The first issue? Separating them lengthens the distance between them, and with it the resistance of the wiring, so power consumption rises accordingly.

Interconnection implementations

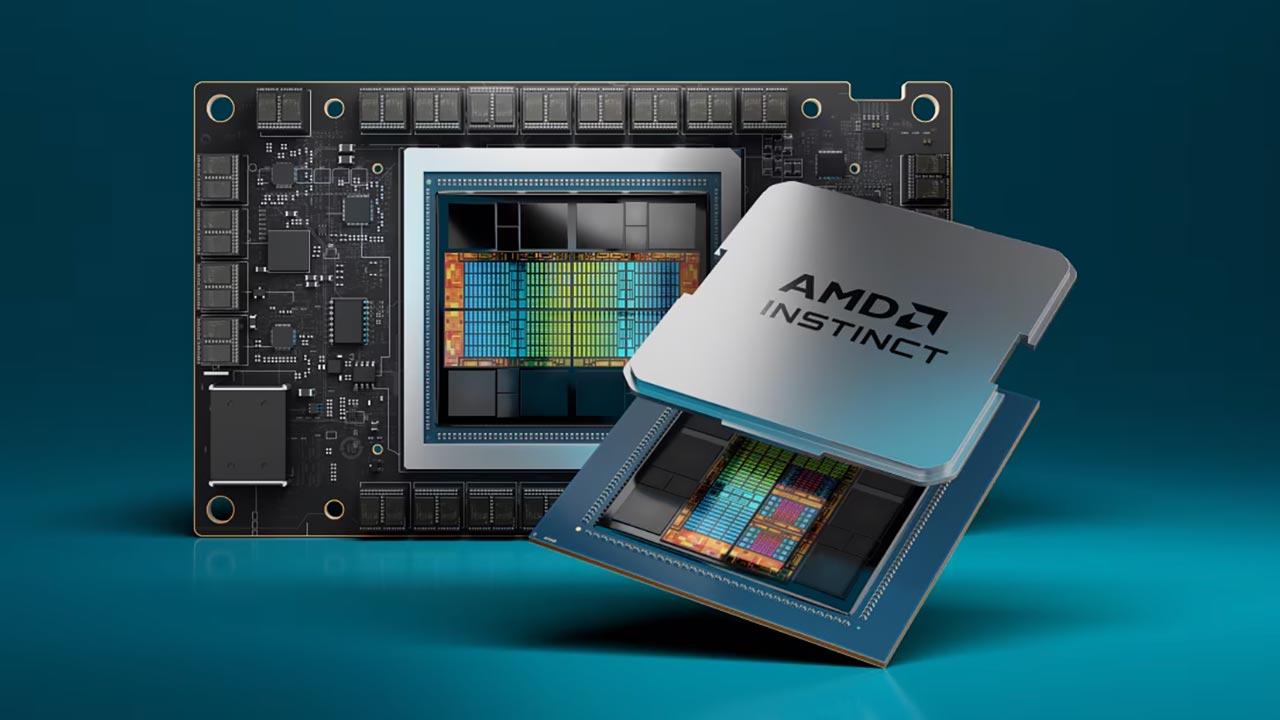

At modest levels of consumption this is not a problem, but in a GPU it is, and we find that a graphics processor made of chiplets cannot be built with traditional communication methods. Hence the development of vertical interconnects to link the different chips: each chip is wired vertically to a common interconnect base called an interposer.

Multi-chip designs are used when the required complexity would make a single chip so large that it becomes counterproductive in manufacturing and cost. In these designs, the vertical interconnections usually run between each element sitting on the interposer and the interposer itself. This is not as efficient as a direct interconnection, again because of the longer communication distance.

For smaller-scale implementations, on the other hand, the usual choice is to stack two or more chips on top of each other and connect them vertically. The stack can consist of two memories, two processors, or a combination of memory and processor. There are already several examples in current hardware, such as HBM memory, 3D NAND flash, Intel’s now-retired Lakefield, and AMD’s Zen 3 cores with V-Cache.

So the 3DIC is not science fiction. It has been part of the hardware world for several years, and it consists of building integrated circuits in which the components communicate vertically instead of horizontally. This brings the energy-consumption advantages of vertical interconnections described earlier.