It’s no secret that one of the most important factors in a CPU’s performance is its interaction with RAM, although not all running processes have the same requirements in terms of bandwidth and latency. For processors aimed at the high-performance computing (HPC) market, this interaction is especially important.

The Roofline model

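To understand the relationship between memory usage and different algorithms, we need to talk about the so-called Roofline model, which is based on measuring the arithmetic intensity of an algorithm. Arithmetic intensity is calculated by dividing the number of floating-point operations the algorithm performs by the number of bytes it transfers to and from RAM. The model then bounds the performance an algorithm can reach by the lower of two limits: the processor’s peak compute rate, and the algorithm’s arithmetic intensity multiplied by the available memory bandwidth. Algorithms with a low arithmetic intensity are therefore limited by memory bandwidth rather than by compute.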
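A minimal Python sketch of the Roofline bound may make this concrete. It assumes the usual formulation, attainable performance = min(peak compute, arithmetic intensity × memory bandwidth). The function names and the machine parameters (`PEAK_GFLOPS`, `BANDWIDTH_GBS`) are hypothetical illustration values, not figures for any real processor. DAXPY (`y = a*x + y`) is used only as a familiar low-intensity example, counting 24 bytes of traffic per element and ignoring write-allocate traffic.

```python
# Minimal Roofline-model sketch.
# Machine parameters below are hypothetical illustration values,
# not measurements of any real processor.

def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """FLOPs performed per byte transferred between RAM and the processor."""
    return flops / bytes_moved


def attainable_gflops(ai: float, peak_gflops: float, bandwidth_gbs: float) -> float:
    """Roofline bound: performance is capped by compute or by memory traffic."""
    return min(peak_gflops, ai * bandwidth_gbs)


def daxpy_intensity(n: int) -> float:
    """DAXPY (y = a*x + y) on n doubles: 2 FLOPs per element, reading x[i]
    and y[i] and writing y[i] (3 x 8 bytes per element, write-allocate ignored)."""
    return arithmetic_intensity(flops=2 * n, bytes_moved=24 * n)


if __name__ == "__main__":
    PEAK_GFLOPS = 2000.0   # hypothetical peak compute, GFLOP/s
    BANDWIDTH_GBS = 200.0  # hypothetical DRAM bandwidth, GB/s
    ridge = PEAK_GFLOPS / BANDWIDTH_GBS  # intensity at which both limits meet
    ai = daxpy_intensity(10**6)
    perf = attainable_gflops(ai, PEAK_GFLOPS, BANDWIDTH_GBS)
    print(f"ridge point: {ridge:.1f} FLOP/byte")
    print(f"DAXPY: AI = {ai:.3f} FLOP/byte -> at most {perf:.1f} GFLOP/s")
```

With these made-up numbers, any algorithm below the ridge point of 10 FLOP/byte is bandwidth-bound, and DAXPY sits far below it.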

This is where the two possible solutions come in for algorithms with a low arithmetic intensity, which therefore demand high memory bandwidth:

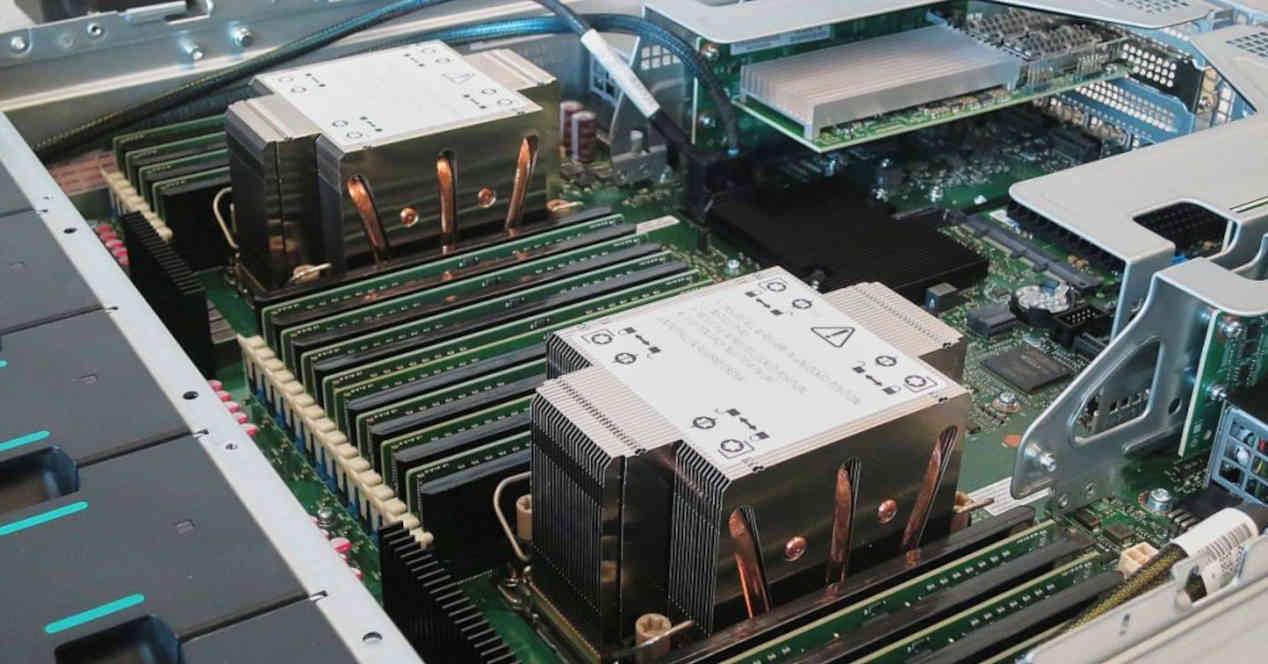

- The use of high-bandwidth memory, with HBM2E being the most suitable option thanks to its very high bandwidth. This solution is used by Fujitsu’s A64FX processor, based on the Arm ISA, and will also be used by the upcoming Intel Xeon Sapphire Rapids.

- A considerable increase in the size of the last-level cache (LLC), which is the cache level closest to RAM and the largest one. In AMD’s case, this is achieved by stacking an additional SRAM die on top of the processor and connecting it through through-silicon vias (TSVs), a solution AMD has dubbed V-Cache.

In both cases, the goal is simply to keep the flow of data to the cores high enough to obtain the best possible performance.
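To illustrate why both options help a memory-bound algorithm, here is a hypothetical Python sketch built on the Roofline bound. It models the larger cache very crudely: an assumed fraction of the kernel’s memory traffic (`llc_hit_fraction`, a made-up parameter) is served from the last-level cache and never reaches DRAM, which raises the arithmetic intensity measured against DRAM. All bandwidth and peak figures are invented for illustration and are not specifications of HBM2E, V-Cache or any real CPU.

```python
# Hypothetical comparison of the two approaches on the Roofline model.
# All numbers are illustrative assumptions, not vendor specifications.
# Simplification: data served from the last-level cache costs no DRAM
# traffic, and cache bandwidth is assumed never to be the bottleneck.

def attainable_gflops(ai: float, peak_gflops: float, bandwidth_gbs: float) -> float:
    """Roofline bound: the lower of the compute roof and the memory roof."""
    return min(peak_gflops, ai * bandwidth_gbs)


def dram_intensity(flops: float, total_bytes: float, llc_hit_fraction: float) -> float:
    """Arithmetic intensity measured against DRAM traffic only.

    llc_hit_fraction is the (hypothetical) share of the kernel's memory
    traffic absorbed by the last-level cache; it must be below 1.
    """
    dram_bytes = total_bytes * (1.0 - llc_hit_fraction)
    return flops / dram_bytes


if __name__ == "__main__":
    PEAK = 2000.0                   # GFLOP/s, hypothetical
    FLOPS, BYTES = 1.0e9, 4.0e9     # a memory-bound kernel: 0.25 FLOP/byte
    scenarios = {
        "baseline DRAM": (dram_intensity(FLOPS, BYTES, 0.0), 200.0),
        "high-bandwidth memory": (dram_intensity(FLOPS, BYTES, 0.0), 800.0),
        "larger last-level cache": (dram_intensity(FLOPS, BYTES, 0.75), 200.0),
    }
    for name, (ai, bw) in scenarios.items():
        perf = attainable_gflops(ai, PEAK, bw)
        print(f"{name:24s} AI={ai:5.2f} FLOP/B  BW={bw:5.0f} GB/s  -> {perf:6.1f} GFLOP/s")
```

With these invented numbers, quadrupling the bandwidth and absorbing three quarters of the traffic in cache both lift the bound from 50 to 200 GFLOP/s. In practice, the fraction of traffic a cache can absorb depends on each algorithm’s working-set size, which is why a larger cache helps some workloads far more than others.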

Why aren’t there any AMD processors that use HBM2E?

You may have noticed that AMD’s roadmap includes no EPYC or Threadripper processor with HBM2E memory. This is because AMD has opted for V-Cache to increase the effective arithmetic intensity of some algorithms commonly run in high-performance computing: the more data is served from the cache, the fewer bytes have to be fetched from RAM. It is very likely that we will see future processors with not just one stacked SRAM die, as in V-Cache, but several.

However, the two ideas can be combined: on the one hand, a 3D IC structure, with dies stacked on top of each other; on the other, a 2.5D IC structure, with dies placed side by side on an interposer below them. We therefore cannot rule out that AMD will use HBM2E memory in its server processors in the future, but we cannot confirm it as long as it does not appear on its roadmap.

Building a processor on an interposer makes it much more expensive to manufacture, especially since few customers use such specialized solutions. In addition, every extra step in the manufacturing process raises costs further, and once the price of HBM2E memory itself is added, AMD’s V-Cache solution ends up much cheaper. If anything, we think Intel will follow the same path in its future processors, since it has its own Foveros die-stacking technology.